I experimented with different samples from the SuperDirt folder and came up with something I thought sounded nice.

When we begin to think of the laptop as a musical instrument, similar to a guitar or piano, live coding takes on a more traditional musical meaning. In my own practice as a CS major, especially in this class, I often rely on pre-written scripts, making only small adjustments or sometimes simply running code and still considering it “live.” However, the paper challenges this assumption by emphasizing that liveness is not defined by the presence of a performer, but by real-time decision-making and compositional activity. This places practices like Deadmau5’s performance, where much is pre-structured, at a different end of the spectrum from live coding. Instead, live coding aligns more closely with improvisational traditions, such as those represented by Derek Bailey, where creation happens in the moment.

Being required to do live coding sessions as a group pushes me away from heavy pre-planning and forces me to engage more directly with the code in real time with my group members. This shift makes the process feel much more aligned with the paper’s idea of liveness, allowing us to respond to each other and build something on the fly rather than relying on pre-written structures.

The first thing you notice is that the document itself doesn’t look like a normal academic paper. It’s filled with visual noise, fragmented text, and off-margin layouts that act like a glitch. This non-traditional format sets up Menkman’s argument that we shouldn’t just ignore the “black box” of our computers, along with her other observations about glitch in the paper.

Menkman describes the glitch as an “exoskeleton of progress,” saying these technical interruptions are actually “wonderful” experiences. When a computer fails, we move from a “negative feeling” to an “intimate, personal experience” where the system finally shows its “inner workings and flaws”. Usually, we use technology so fast that it feels transparent, but a glitch breaks that flow and forces us to be “shocked, lost and in awe” of what the machine is actually doing.

One of the most interesting points she makes is that a glitch is “ephemeral”. The second you “name” a glitch or understand how it was created, its “momentum” is gone. It stops being a mysterious rupture and just becomes a new set of conditions or a “domesticated” tool. For Menkman, the real value of computer noise isn’t in fixing it, but in that brief moment of “devastation” where a “spark of creative energy” shows us that the machine can be something more than what it was programmed to be.

In my Tidal code (composition.tidal), I grouped the audio patterns into named blocks like intro, melody, and transition. This made it easy to perform the piece by triggering different parts, moving from a calm beginning to a rhythmic middle section, and finishing with a bright ending. To connect the audio and visuals, Tidal sends MIDI control change (CC) messages to my Hydra code (composition.js). Hydra uses these messages to control a sequence of four background videos and apply visual effects that react directly to the beat. For example, the kick drum changes the video’s contrast, while other musical elements control effects like rotation, pixelation, and scaling. This setup keeps the visual changes synchronized with the music. Although I synced most of the visuals with the Tidal sound via CC numbers (ccn), for the background chords I used timing in the .js file instead, similar to how I set the chord durations with sustain in the .tidal file.
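The kick-to-contrast mapping can be tried outside Hydra. The following is a minimal sketch, not the project's actual code path: the helper names `stepEnvelope` and `contrastFor` are hypothetical, and it assumes a per-frame decay of 0.82 and a contrast depth of 0.08, the same constants used with `beatEnv` in composition.js. It simulates one kick (CC value 127) followed by silent frames to show how the contrast jumps and then eases back toward 1.

```javascript
// Hypothetical helpers for illustration; DECAY and DEPTH are assumed values.
const DECAY = 0.82; // fraction of the envelope kept on each frame
const DEPTH = 0.08; // how much a full-strength beat raises contrast

// Advance the envelope by one frame: jump up to the incoming CC level,
// otherwise let the previous value decay.
function stepEnvelope(env, ccValue) {
  const norm = Math.max(0, Math.min(1, ccValue / 127)); // 0..127 -> 0..1
  return Math.max(env * DECAY, norm);
}

// Map the envelope to a contrast multiplier (1 means unchanged).
function contrastFor(env) {
  return 1 + env * DEPTH;
}

// Simulate a kick on the first frame followed by three silent frames.
const frames = [127, 0, 0, 0];
let env = 0;
const contrasts = frames.map((cc) => {
  env = stepEnvelope(env, cc);
  return contrastFor(env);
});

console.log(contrasts.map((c) => c.toFixed(3)).join(" "));
```

Taking the maximum of the decayed value and the new CC value means a fresh kick always wins immediately, while the decay avoids a hard on/off flicker between MIDI messages.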

Tidal Code:

setcps (0.5)

let intro = do
      resetCycles
      d9 $ ccv "127"
        # ccn "2"
        # s "midi"
      d1 $ slow 16 $ s "supersquare"
        <| n "c'min7 f'min7 bf'maj7 g'dom7 c'min7 f'min7 bf'maj7 g4'dom7"
        # lpf 400
        # gain 0.9
        # sustain 4

let bd = do
      d2 $ struct "t f f t@t f f t" $ s "808bd*4"
        # gain 2
        # room 0.8
      d8 $ struct "t f f t@t f f t" $ ccv "127 0 127 0"
        # ccn "1"
        # s "midi"

let bd2 = do
      d2 $ struct "t f f t@t f f t" $ s "808bd*4"
        # gain 2
        # room 0.5
      d8 $ struct "t f f t@t f f t" $ ccv "127 0 127 0"
        # ccn "1"
        # s "midi"

let melody = do
      d3 $ s "simplesine"
        >| note ((scale "major" "<[[0 5 10 7] [0 5 10 -5]] [[0 0 5 ~ 10 ~ 7 ~] [0 5 ~ -5]]>"))
        # room 0.6
        # gain 0.9
      d10 $ struct "t ~ t ~ t ~ t ~" $ ccv "127 0 127 0"
        # ccn "3"
        # s "midi"

let shimmer = do
      d4 $ s "simplesine"
        >| note ((scale "major" "<[12 14 15 17] [12 ~ 15 ~]>"))
        # gain 0.8
        # room 0.8
        # lpf 2000

let melody2 = do
      d5 $ s "simplesine"
        >| note ((scale "major" "<[[3 7 5 10] [3 ~ 7 ~]] [[5 3 7 ~ 5 ~] [7 5 ~ 3]]>"))
        # room 0.5
        # gain 0.9
        # delay 0.2
        # delaytime 0.25

let rise = do
      d6 $ qtrigger $ filterWhen (>=0) $ slow 2 $
        s "supersquare*32"
        # note (range (-24) 12 saw)
        # sustain 0.08
        # hpf (range 180 4200 saw)
        # lpf 9000
        # room 0.45
        # gain (range 0.8 1.15 saw)

let stop = do
      d1 silence
      d2 silence
      d3 silence
      d4 silence
      d5 silence
      d6 silence
      d7 silence
      d8 silence
      d9 $ ccv "0"
        # ccn "2"
        # s "midi"
      d10 $ ccv "0"
        # ccn "3"
        # s "midi"
      d11 $ ccv "0"
        # ccn "4"
        # s "midi"
      d12 $ ccv "0"
        # ccn "5"
        # s "midi"

let pauseStart = do
      d1 silence
      d2 silence
      d3 silence
      d8 silence
      d9 $ ccv "0"
        # ccn "2"
        # s "midi"
      d10 $ ccv "0"
        # ccn "3"
        # s "midi"

let transition = do
      d6 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0,2, slow 2 $
            s "supersquare*32"
            # note (range (-24) 12 saw)
            # sustain 0.08
            # hpf (range 180 4200 saw)
            # lpf 9000
            # room 0.45
            # gain (range 0.8 1.15 saw)
          )
        , (2,64, silence)
        ]
      d7 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0,2, silence)
        , (2,2.25, stack
            [ s "808bd*16" # gain 3.6 # speed 0.6 # crush 3 # distort 0.35 # room 0.3 # size 0.8
            , s "crash*8" # gain 1.8 # speed 0.8 # room 0.35
            , s "noise2:2*8" # gain 1.5 # hpf 1200 # room 0.25 # size 0.6
            , s "supersquare" # note "-24" # sustain 0.35 # lpf 220 # gain 1.9
            ])
        , (2.25,64, silence)
        ]
      d11 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2.25, ccv "127" # ccn "4" # s "midi")
        , (2.25, 64, ccv "0" # ccn "4" # s "midi")
        ]

let mid = do
      d1 $ stack
        [ fast 2 $ s "glitch*4"
            # gain 0.7
            # room 0.2
        , fast 2 $ s "bass 808bd 808bd <bass realclaps:3>"
            # gain 0.8
            # room 0.2
        , fast 2 $ s "hh*8"
            # gain 0.75
        ]
      d2 $ slow 2 $ s "arpy"
        <| up "c'min7(5,8) ~ f'maj5(5,8,3) bf4'min7(3,8)"
        # room 0.45
        # gain 0.9
      d3 $ slow 2 $ s "feelfx"
        <| up "c'min7(5,8) ~ f'maj5(5,8,3) bf4'min7(3,8)"
        # hpf 800
        # room 0.6
        # gain 0.45
      d4 silence
      d5 silence
      d6 silence
      d7 silence
      d8 silence
      d9 $ ccv "0"
        # ccn "2"
        # s "midi"
      d10 $ ccv "0"
        # ccn "3"
        # s "midi"
      d11 $ ccv "0"
        # ccn "4"
        # s "midi"
      d12 $ ccv "127"
        # ccn "5"
        # s "midi"

let transitionAndPause = do
      transition
      d1 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, slow 16 $ s "supersquare"
            <| n "c'min7 f'min7 bf'maj7 g'dom7 c'min7 f'min7 bf'maj7 g4'dom7"
            # lpf 400 # gain 0.9 # sustain 4)
        , (2, 64, silence)
        ]
      d2 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, struct "t f f t@t f f t" $ s "808bd*4" # gain 2 # room 0.8)
        , (2, 64, silence)
        ]
      d3 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, s "simplesine"
            >| note ((scale "major" "<[[0 5 10 7] [0 5 10 -5]] [[0 0 5 ~ 10 ~ 7 ~] [0 5 ~ -5]]>"))
            # room 0.6 # gain 0.9)
        , (2, 64, silence)
        ]
      d8 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, struct "t f f t@t f f t" $ ccv "127 0 127 0" # ccn "1" # s "midi")
        , (2, 64, ccv "0" # ccn "1" # s "midi")
        ]
      d9 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, ccv "127" # ccn "2" # s "midi")
        , (2, 64, ccv "0" # ccn "2" # s "midi")
        ]
      d10 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, struct "t ~ t ~ t ~ t ~" $ ccv "127 0 127 0" # ccn "3" # s "midi")
        , (2, 64, ccv "0" # ccn "3" # s "midi")
        ]

--start

intro

bd

melody

--transition

transitionAndPause

mid

stop

--final

shimmer

melody2

bd2

intro

d4 silence

d5 silence

d6 silence

d7 silence

d8 silence

d3 silence

d2 silence

d9 $ ccv "0" # ccn "2" # s "midi"

d1 silence

hush

JS Code:

hush()

solid(0,0,0,1).out(o0)

if (typeof ccActual === "undefined") {
  loadScript("/Users/rekas/Documents/NYUAD/SeniorSpring/Livecoding/liveCoding/midi.js")
}

let basePath = "/Users/rekas/Documents/NYUAD/SeniorSpring/Livecoding/vids/"
let videoNames = []
let vids = []
let allLoaded = false
let loadedCount = 0
let whichVid = 0
let switchEverySeconds = 4
let introStartTime = 0
let introRunning = false
let beatEnv = 0
let prevIntroGate = false
let introArmed = false

let initVideos = () => {
  videoNames = [
    basePath + "vid1.mp4",
    basePath + "vid2.mp4",
    basePath + "vid3.mp4",
    basePath + "vid4.mp4"
  ]

  vids = []
  allLoaded = false
  loadedCount = 0
  whichVid = 0
  introStartTime = 0
  introRunning = false
  prevIntroGate = false
  introArmed = false
  beatEnv = 0

  for (let i = 0; i < videoNames.length; i++) {
    vids[i] = document.createElement("video")
    vids[i].autoplay = true
    vids[i].loop = true
    vids[i].muted = true
    vids[i].playsInline = true
    vids[i].crossOrigin = "anonymous"
    vids[i].src = videoNames[i]

    vids[i].addEventListener("loadeddata", function () {
      loadedCount += 1
      vids[i].play().catch(() => {})
      if (loadedCount === videoNames.length) {
        allLoaded = true
      }
    }, false)
  }

  if (vids[0]) {
    s0.init({src: vids[0]})
    vids[0].currentTime = 0
    vids[0].playbackRate = 1
    vids[0].play().catch(() => {})
  }
}

let getCcNorm = (index) => {
  if (typeof ccActual !== "undefined" && Array.isArray(ccActual)) {
    return Math.max(0, Math.min(1, ccActual[index] / 127))
  }
  if (typeof cc !== "undefined" && Array.isArray(cc)) {
    return Math.max(0, Math.min(1, cc[index]))
  }
  return 0
}

let switchToVid = (index, force = false) => {
  let safeIndex = Math.max(0, Math.min(vids.length - 1, index))
  if (!force && safeIndex === whichVid) return

  whichVid = safeIndex
  let nextVid = vids[whichVid]
  if (!nextVid) return

  nextVid.currentTime = 0
  nextVid.playbackRate = 1
  s0.init({src: nextVid})
  nextVid.play().catch(() => {})
}

update = () => {
  if (!vids || vids.length === 0) return

  let introGate = getCcNorm(2) > 0.5

  if (!introGate) {
    introArmed = true
  }

  if (introArmed && introGate && !prevIntroGate) {
    introStartTime = time
    switchToVid(0, true)
  }

  introRunning = introGate
  prevIntroGate = introGate

  //4s per video when intro is on
  if (introRunning) {
    let elapsed = Math.max(0, time - introStartTime)
    let nextIndex = Math.floor(elapsed / switchEverySeconds) % vids.length
    switchToVid(nextIndex)
  }

  beatEnv = Math.max(beatEnv * 0.82, getCcNorm(1))
}

src(s0)
  .blend(src(s0).invert(), () => getCcNorm(1) > 0.55 ? 0.95 : 0)
  .rotate(() => getCcNorm(3) > 0.55 ? 0.12 : 0)
  .scale(() => getCcNorm(4) > 0.55 ? 1.4 : 1)
  .modulate(noise(3), () => getCcNorm(4) > 0.55 ? 0.08 : 0)
  .pixelate(
    () => getCcNorm(5) > 0.55 ? 30 : 2000,
    () => getCcNorm(5) > 0.55 ? 30 : 2000
  )
  .colorama(() => getCcNorm(5) > 0.55 ? 0.04 : 0)
  .contrast(() => 1 + beatEnv * 0.08)
  .out(o0)

initVideos()

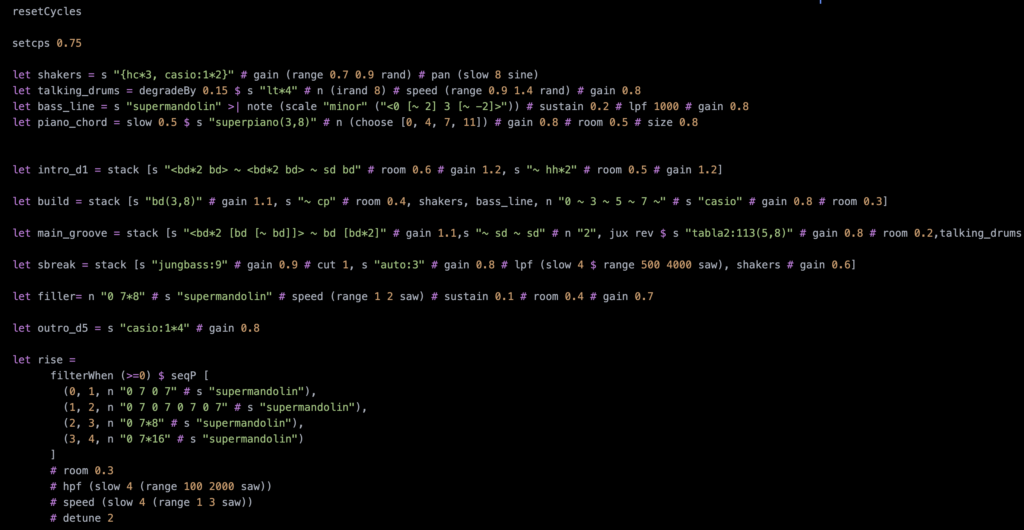

I began the composition with a simple drum and snare pattern, initially aiming to come up with an Afrobeat composition, but I ended up with something completely different. It sounded good to me, so I chose to follow that direction. The structure follows Intro → A → Rise → B → A → B. The composition is organized using timed sequences and multiple channels, which allows me to control individual layers. MIDI patterns are used to drive the Hydra visuals, but this was quite challenging because my entire visual setup is made of videos. Getting them to sync with the MIDI pattern was, and still is, very challenging.

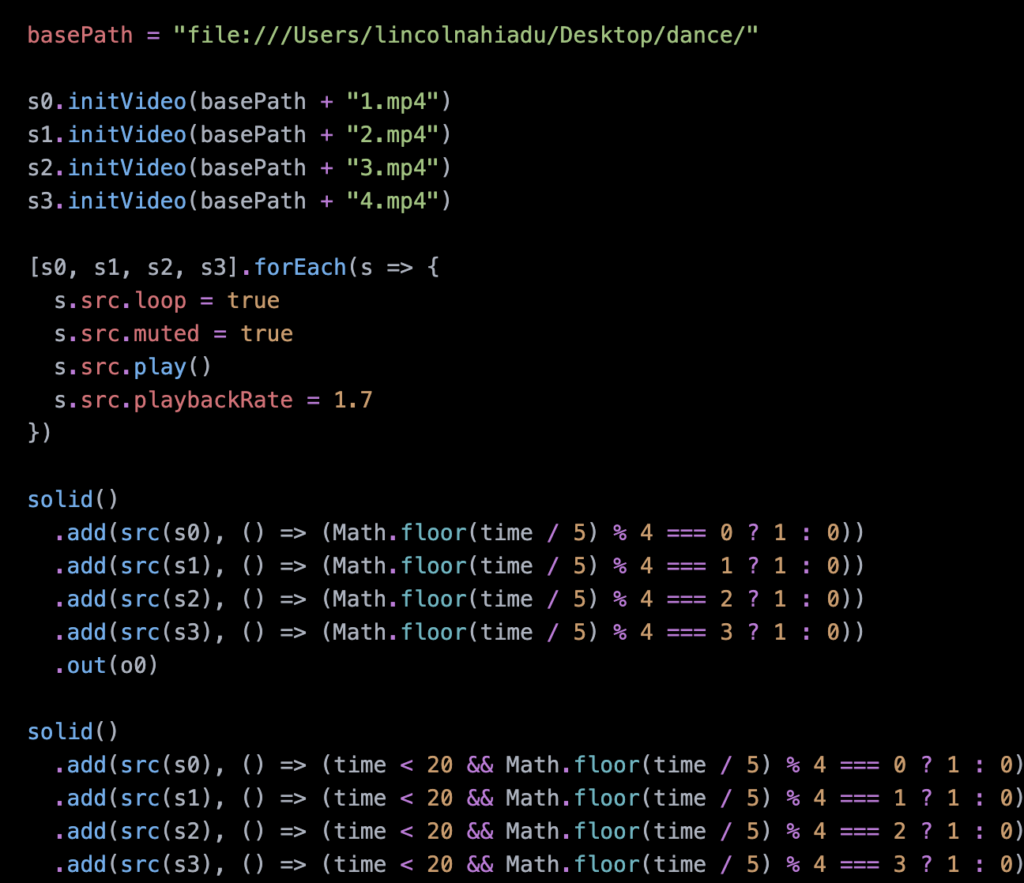

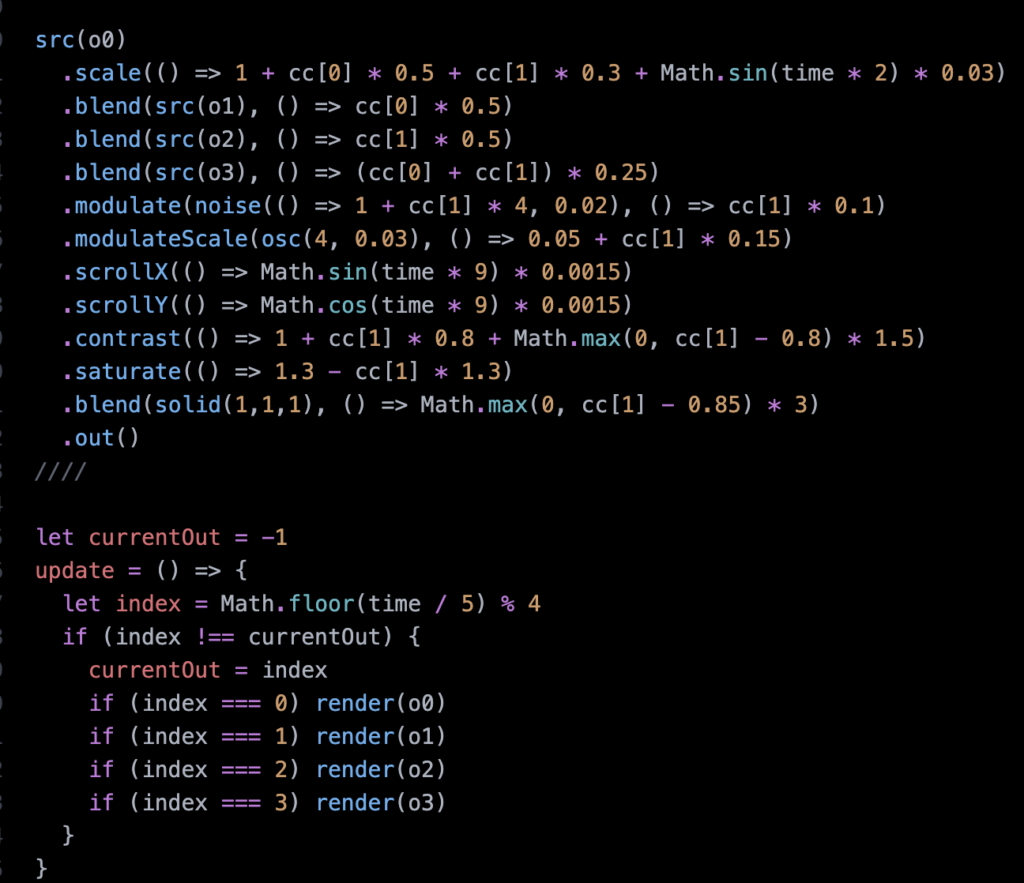

Code snippets:

Hydra visuals

Tidal snippets:

What I loved most about this reading is Kurokawa’s approach to presenting his art. His content is very cohesive and well-researched in all his projects, but he curates his own pieces in such a way that just looking at the artwork is an experience in itself. He says he does not have synesthesia himself, but he creates experiences with the principle in mind. He focuses on the implicit interactions that occur during an experience: the eyes seeing the beat of the music, the body feeling the vibrations of the light, and the ears associating visuals with the sounds. I imagine he would be really hard for curators to work with because he has such a strong vision for how his artwork is meant to be shown. But at the same time, I love his intentionality and direction. I really admire how he’s able to take the chaos of nature and our environment and express it in its full abrasive glory while using simple interactions to immerse the audience in his world.

What I find most interesting is Kurokawa’s statement, “Nature is disorder. I like to use nature to create order… I like to denature.” I have always thought of nature as a form of order: ecosystems functioning in balance, patterns repeating, and life sustaining itself without human interference. In contrast, I tend to see human beings as the source of disruption and chaos. That is why his perspective feels so unexpected to me. By describing nature as disorder, he shifts the responsibility of structure and organization onto the artist. It suggests that order does not simply exist waiting to be admired; it can be constructed through interpretation and design.