Practice 1:

After reading Rosa Menkman’s Glitch Studies Manifesto, what stands out most is her argument that we should stop viewing technological errors simply as problems that need to be fixed. Instead of constantly chasing the impossible goal of a perfect, invisible interface, Menkman suggests that glitches and digital noise actually give us a valuable peek behind the curtain of our technology. When a system breaks, it interrupts our blind trust and reveals the hidden rules, biases, and flaws built into the software we use every day. I really appreciate how the manifesto frames these moments of technical failure not as a dead end, but as a creative spark, a chance to bend the rules, question the digital systems that govern our lives, and build something entirely new out of the broken pieces.

In my Tidal code (composition.tidal), I grouped the audio patterns into named blocks such as intro, melody, and transition. This made it easy to perform the piece by triggering different parts, moving from a calm beginning to a rhythmic middle section and finishing with a bright ending. To connect the audio and visuals, Tidal sends MIDI control change (CC) messages to my Hydra code (composition.js). Hydra uses these messages to control a sequence of four background videos and to apply visual effects that react directly to the beat. For example, the kick drum changes the video’s contrast, while other musical elements control effects like rotation, pixelation, and scaling. Because the effects are driven by the same patterns as the sound, the visual changes stay in sync with the music. Although most visuals are synced to the Tidal sound through CC numbers (ccn), the video changes that follow the background chords are timed in the .js file, similar to how I timed the chords themselves in the .tidal file, where I set each chord's length with sustain.
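The timing relationship between the chords and the videos can be checked with a little arithmetic. The sketch below is a standalone illustration, not part of the performance code: `secondsPerChord` and `videoIndexAt` are hypothetical helper names, while the numbers (a pattern of 8 chords slowed by 16 at 0.5 cycles per second, and 4 videos) are taken from the two files.

```javascript
// Seconds each chord lasts: the 8-chord pattern is stretched over 16 cycles,
// and the tempo is 0.5 cycles per second.
function secondsPerChord(slowFactor, chordCount, cps) {
  return slowFactor / chordCount / cps
}

// Which video should be on screen `elapsed` seconds after the intro starts.
function videoIndexAt(elapsed, secondsPerVideo, videoCount) {
  const safeElapsed = Math.max(0, elapsed)
  return Math.floor(safeElapsed / secondsPerVideo) % videoCount
}

const chordSeconds = secondsPerChord(16, 8, 0.5)
const sampleTimes = [0, 3.9, 4, 8, 12, 16]
const sampleIndices = sampleTimes.map((t) => videoIndexAt(t, chordSeconds, 4))

console.log(chordSeconds)  // 4
console.log(sampleIndices) // [ 0, 0, 1, 2, 3, 0 ]
```

Each chord lasts 4 seconds, which matches the 4-second video interval, so a new video appears with each new chord even though no CC message is sent at the chord change.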

Tidal Code:

setcps (0.5)

let intro = do
      resetCycles
      d9 $ ccv "127"
        # ccn "2"
        # s "midi"
      d1 $ slow 16 $ s "supersquare"
        <| n "c'min7 f'min7 bf'maj7 g'dom7 c'min7 f'min7 bf'maj7 g4'dom7"
        # lpf 400
        # gain 0.9
        # sustain 4

let bd = do
      d2 $ struct "t f f t@t f f t" $ s "808bd*4"
        # gain 2
        # room 0.8
      d8 $ struct "t f f t@t f f t" $ ccv "127 0 127 0"
        # ccn "1"
        # s "midi"

let bd2 = do
      d2 $ struct "t f f t@t f f t" $ s "808bd*4"
        # gain 2
        # room 0.5
      d8 $ struct "t f f t@t f f t" $ ccv "127 0 127 0"
        # ccn "1"
        # s "midi"

let melody = do
      d3 $ s "simplesine"
        >| note ((scale "major" "<[[0 5 10 7] [0 5 10 -5]] [[0 0 5 ~ 10 ~ 7 ~] [0 5 ~ -5]]>"))
        # room 0.6
        # gain 0.9
      d10 $ struct "t ~ t ~ t ~ t ~" $ ccv "127 0 127 0"
        # ccn "3"
        # s "midi"

let shimmer = do
      d4 $ s "simplesine"
        >| note ((scale "major" "<[12 14 15 17] [12 ~ 15 ~]>"))
        # gain 0.8
        # room 0.8
        # lpf 2000

let melody2 = do
      d5 $ s "simplesine"
        >| note ((scale "major" "<[[3 7 5 10] [3 ~ 7 ~]] [[5 3 7 ~ 5 ~] [7 5 ~ 3]]>"))
        # room 0.5
        # gain 0.9
        # delay 0.2
        # delaytime 0.25

let rise = do
      d6 $ qtrigger $ filterWhen (>=0) $ slow 2 $
        s "supersquare*32"
          # note (range (-24) 12 saw)
          # sustain 0.08
          # hpf (range 180 4200 saw)
          # lpf 9000
          # room 0.45
          # gain (range 0.8 1.15 saw)

let stop = do
      d1 silence
      d2 silence
      d3 silence
      d4 silence
      d5 silence
      d6 silence
      d7 silence
      d8 silence
      d9 $ ccv "0"
        # ccn "2"
        # s "midi"
      d10 $ ccv "0"
        # ccn "3"
        # s "midi"
      d11 $ ccv "0"
        # ccn "4"
        # s "midi"
      d12 $ ccv "0"
        # ccn "5"
        # s "midi"

let pauseStart = do
      d1 silence
      d2 silence
      d3 silence
      d8 silence
      d9 $ ccv "0"
        # ccn "2"
        # s "midi"
      d10 $ ccv "0"
        # ccn "3"
        # s "midi"

let transition = do
      d6 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, slow 2 $
            s "supersquare*32"
              # note (range (-24) 12 saw)
              # sustain 0.08
              # hpf (range 180 4200 saw)
              # lpf 9000
              # room 0.45
              # gain (range 0.8 1.15 saw)
          )
        , (2, 64, silence)
        ]
      d7 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, silence)
        , (2, 2.25, stack
            [ s "808bd*16" # gain 3.6 # speed 0.6 # crush 3 # distort 0.35 # room 0.3 # size 0.8
            , s "crash*8" # gain 1.8 # speed 0.8 # room 0.35
            , s "noise2:2*8" # gain 1.5 # hpf 1200 # room 0.25 # size 0.6
            , s "supersquare" # note "-24" # sustain 0.35 # lpf 220 # gain 1.9
            ])
        , (2.25, 64, silence)
        ]
      d11 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2.25, ccv "127" # ccn "4" # s "midi")
        , (2.25, 64, ccv "0" # ccn "4" # s "midi")
        ]

let mid = do
      d1 $ stack
        [ fast 2 $ s "glitch*4"
            # gain 0.7
            # room 0.2
        , fast 2 $ s "bass 808bd 808bd <bass realclaps:3>"
            # gain 0.8
            # room 0.2
        , fast 2 $ s "hh*8"
            # gain 0.75
        ]
      d2 $ slow 2 $ s "arpy"
        <| up "c'min7(5,8) ~ f'maj5(5,8,3) bf4'min7(3,8)"
        # room 0.45
        # gain 0.9
      d3 $ slow 2 $ s "feelfx"
        <| up "c'min7(5,8) ~ f'maj5(5,8,3) bf4'min7(3,8)"
        # hpf 800
        # room 0.6
        # gain 0.45
      d4 silence
      d5 silence
      d6 silence
      d7 silence
      d8 silence
      d9 $ ccv "0"
        # ccn "2"
        # s "midi"
      d10 $ ccv "0"
        # ccn "3"
        # s "midi"
      d11 $ ccv "0"
        # ccn "4"
        # s "midi"
      d12 $ ccv "127"
        # ccn "5"
        # s "midi"

let transitionAndPause = do
      transition
      d1 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, slow 16 $ s "supersquare"
            <| n "c'min7 f'min7 bf'maj7 g'dom7 c'min7 f'min7 bf'maj7 g4'dom7"
            # lpf 400 # gain 0.9 # sustain 4)
        , (2, 64, silence)
        ]
      d2 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, struct "t f f t@t f f t" $ s "808bd*4" # gain 2 # room 0.8)
        , (2, 64, silence)
        ]
      d3 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, s "simplesine"
            >| note ((scale "major" "<[[0 5 10 7] [0 5 10 -5]] [[0 0 5 ~ 10 ~ 7 ~] [0 5 ~ -5]]>"))
            # room 0.6 # gain 0.9)
        , (2, 64, silence)
        ]
      d8 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, struct "t f f t@t f f t" $ ccv "127 0 127 0" # ccn "1" # s "midi")
        , (2, 64, ccv "0" # ccn "1" # s "midi")
        ]
      d9 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, ccv "127" # ccn "2" # s "midi")
        , (2, 64, ccv "0" # ccn "2" # s "midi")
        ]
      d10 $ qtrigger $ filterWhen (>=0) $ seqP
        [ (0, 2, struct "t ~ t ~ t ~ t ~" $ ccv "127 0 127 0" # ccn "3" # s "midi")
        , (2, 64, ccv "0" # ccn "3" # s "midi")
        ]

--start

intro

bd

melody

--transition

transitionAndPause

mid

stop

--final

shimmer

melody2

bd2

intro

d4 silence

d5 silence

d6 silence

d7 silence

d8 silence

d3 silence

d2 silence

d9 $ ccv "0" # ccn "2" # s "midi"

d1 silence

hush

JS Code:

hush()

solid(0,0,0,1).out(o0)

if (typeof ccActual === "undefined") {
  loadScript("/Users/rekas/Documents/NYUAD/SeniorSpring/Livecoding/liveCoding/midi.js")
}

let basePath = "/Users/rekas/Documents/NYUAD/SeniorSpring/Livecoding/vids/"
let videoNames = []
let vids = []
let allLoaded = false
let loadedCount = 0
let whichVid = 0
let switchEverySeconds = 4
let introStartTime = 0
let introRunning = false
let beatEnv = 0
let prevIntroGate = false
let introArmed = false

let initVideos = () => {
  videoNames = [
    basePath + "vid1.mp4",
    basePath + "vid2.mp4",
    basePath + "vid3.mp4",
    basePath + "vid4.mp4"
  ]
  vids = []
  allLoaded = false
  loadedCount = 0
  whichVid = 0
  introStartTime = 0
  introRunning = false
  prevIntroGate = false
  introArmed = false
  beatEnv = 0
  for (let i = 0; i < videoNames.length; i++) {
    vids[i] = document.createElement("video")
    vids[i].autoplay = true
    vids[i].loop = true
    vids[i].muted = true
    vids[i].playsInline = true
    vids[i].crossOrigin = "anonymous"
    vids[i].src = videoNames[i]
    vids[i].addEventListener("loadeddata", function () {
      loadedCount += 1
      vids[i].play().catch(() => {})
      if (loadedCount === videoNames.length) {
        allLoaded = true
      }
    }, false)
  }
  if (vids[0]) {
    s0.init({src: vids[0]})
    vids[0].currentTime = 0
    vids[0].playbackRate = 1
    vids[0].play().catch(() => {})
  }
}

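let getCcNorm = (index) => {
  // ccActual is treated as raw 0-127 values; a CC that has not arrived yet counts as 0
  if (typeof ccActual !== "undefined" && Array.isArray(ccActual)) {
    return Math.max(0, Math.min(1, (ccActual[index] || 0) / 127))
  }
  // cc is treated as already normalized to 0-1
  if (typeof cc !== "undefined" && Array.isArray(cc)) {
    return Math.max(0, Math.min(1, cc[index] || 0))
  }
  return 0
}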

let switchToVid = (index, force = false) => {
  let safeIndex = Math.max(0, Math.min(vids.length - 1, index))
  if (!force && safeIndex === whichVid) return
  whichVid = safeIndex
  let nextVid = vids[whichVid]
  if (!nextVid) return
  nextVid.currentTime = 0
  nextVid.playbackRate = 1
  s0.init({src: nextVid})
  nextVid.play().catch(() => {})
}

update = () => {
  if (!vids || vids.length === 0) return
  let introGate = getCcNorm(2) > 0.5
  if (!introGate) {
    introArmed = true
  }
  if (introArmed && introGate && !prevIntroGate) {
    introStartTime = time
    switchToVid(0, true)
  }
  introRunning = introGate
  prevIntroGate = introGate
  //4s per video when intro is on
  if (introRunning) {
    let elapsed = Math.max(0, time - introStartTime)
    let nextIndex = Math.floor(elapsed / switchEverySeconds) % vids.length
    switchToVid(nextIndex)
  }
  beatEnv = Math.max(beatEnv * 0.82, getCcNorm(1))
}

src(s0)
  .blend(src(s0).invert(), () => getCcNorm(1) > 0.55 ? 0.95 : 0)
  .rotate(() => getCcNorm(3) > 0.55 ? 0.12 : 0)
  .scale(() => getCcNorm(4) > 0.55 ? 1.4 : 1)
  .modulate(noise(3), () => getCcNorm(4) > 0.55 ? 0.08 : 0)
  .pixelate(
    () => getCcNorm(5) > 0.55 ? 30 : 2000,
    () => getCcNorm(5) > 0.55 ? 30 : 2000
  )
  .colorama(() => getCcNorm(5) > 0.55 ? 0.04 : 0)
  .contrast(() => 1 + beatEnv * 0.08)
  .out(o0)

initVideos()
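The beatEnv line in update works as a small envelope follower: each kick snaps the value up to the incoming CC level, and between kicks it fades by a constant factor per frame. The sketch below isolates that idea so it can be run outside Hydra. It is an illustration under assumptions: `stepEnvelope` is a hypothetical helper name, and the CC values are made-up frames rather than recorded MIDI data.

```javascript
// Peak-hold envelope with exponential decay, as in the beatEnv update:
// keep whichever is larger, the faded previous value or the new input.
function stepEnvelope(env, input, decay = 0.82) {
  return Math.max(env * decay, input)
}

// Simulated normalized CC 1 values over six frames: a hit, silence, another hit.
const ccFrames = [1, 0, 0, 0, 1, 0]

let env = 0
const trace = ccFrames.map((ccValue) => {
  env = stepEnvelope(env, ccValue)
  return Number(env.toFixed(3))
})

console.log(trace)
```

Because the decay is applied once per frame, the fade is faster at higher frame rates. The envelope also smooths the contrast change so it does not flicker on and off the way the thresholded effects do.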

I was fascinated by Kurokawa’s focus on synesthesia and the deconstruction of nature. He takes the natural randomness of our environment, such as the chaotic motion of microscopic particles, and translates it into highly controlled, digital audiovisual experiences. By acting as a “time designer,” Kurokawa ensures his pieces never just dissolve into a mess of noise. Instead, he carefully layers real-world field recordings with computer-generated graphics so that what you hear and what you see feel like a single, connected unit. This approach shows how we can use digital tools to completely rebuild our perception of the natural world.

Kurokawa’s style offers a great lesson on the importance of flow and artistic intention. When coding generative art, it is very easy for an algorithmic composition to lose its structure and overwhelm the audience. However, Kurokawa proves that by carefully guiding the transitions between order and disorder, an artist can successfully harness that chaos. His work is a powerful reminder that mastering the audio-visual flow is what transforms raw data and abstract code into a truly engaging and meaningful performance.

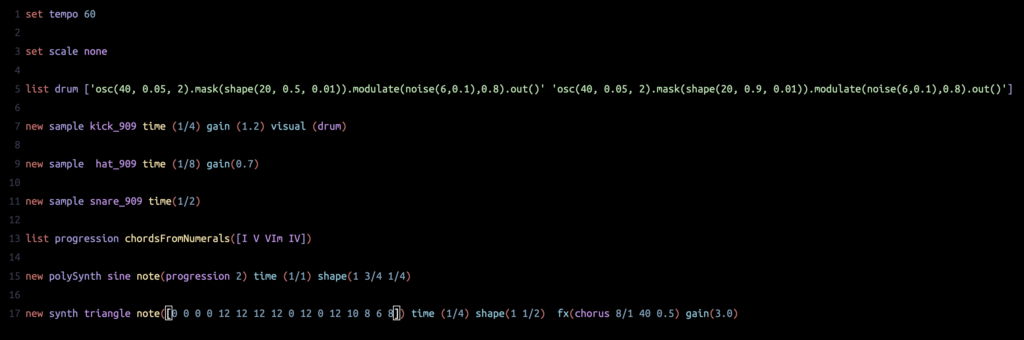

Mercury is a browser-based live coding environment created by Timo Hoogland. It is designed specifically to make algorithmic music performance human-readable and accessible to beginners. Unlike traditional programming languages that require complex syntax, Mercury uses a simplified, English-like structure (e.g., “new sample beat”), allowing the audience to understand the code as it is written.

Mercury operates as a high-level abstraction over the Web Audio API, running entirely in the browser without requiring external software or heavy audio engines. A key feature of the platform is its integrated audiovisual engine. It seamlessly connects audio generation with visual synthesis, often powered by Hydra, allowing performers to generate sound and 3D graphics simultaneously within a single interface. This design transforms the act of coding into a live, improvisational performance art, blurring the line between technical scripting and musical expression.

Video link: https://youtu.be/T5tb5NLn5DM

Culturally, I felt an immediate connection when the author cited Ghanaian percussionist C. K. Ladzekpo, noting that he would stop playing to chide students for playing without emotion. This validates something I have always felt while listening to music from home: the feel of a rhythm is not just about keeping time but about conveying a universe of expression through simple, repetitive patterns. The text articulates that this African and African-American aesthetic relies on microtiming, sensitivities to timing on the order of a few milliseconds.

However, reading this through a technical lens, I was fascinated by the author’s attempt to quantify soul. The explanation of the pocket as a specific backbeat delay, where the snare is played slightly later than the mathematical midpoint, was a revelation. It transforms an abstract emotional concept, playing laid back, into a programmable variable. The text says that understanding these minor adjustments is crucial to using computers to create rhythmically vital music. We often think of computers as tools for rigid quantization, but the author points to a gray area between bodily presence and electronic impossibility. If musical messages are passed through deviations from strict metricality, then the challenge for me as a programmer is not just to code the beat but to code the deviation. It suggests that, to make electronic music that feels alive, like the Afrobeats I grew up with, I need to treat the error not as a bug but as the most essential feature of the code.
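To make the idea of coding the deviation concrete, here is a minimal sketch that computes onset times for one bar of 4/4 and pushes the backbeats (beats 2 and 4) slightly late. It is only an illustration: `barOnsets` is a hypothetical function, and the 120 BPM tempo and 15 ms delay are assumed values chosen for the example, not figures from the reading.

```javascript
// Onset times in milliseconds for one 4/4 bar, with the backbeats delayed.
function barOnsets(bpm, backbeatDelayMs) {
  const beatMs = 60000 / bpm
  return [0, 1, 2, 3].map((beatIndex) => {
    const isBackbeat = beatIndex % 2 === 1 // beats 2 and 4
    const delay = isBackbeat ? backbeatDelayMs : 0
    return { beat: beatIndex + 1, onsetMs: beatIndex * beatMs + delay }
  })
}

const onsets = barOnsets(120, 15)
console.log(onsets.map((o) => o.onsetMs)) // [ 0, 515, 1000, 1515 ]
```

With a delay of 0 the bar is strictly quantized, so the whole feel of the pocket lives in that one parameter.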

Reading the excerpts on live coding, I found a powerful bridge between the rigorous, serious engineering of my Computer Science major and the immersive worlds of music and the cosmos where I love to get lost. The text describes live coding as a way to unthink the engineering of a day job, transforming the act of programming from a routine task into an “adventure and exploration” that feels akin to traversing the universe. As a senior from Ghana minoring in Interactive Media, I am inspired by how this practice turns the laptop into a “universal instrument,” allowing me to meld my technical background with my creative passions in a conversational flow that is as expressive and boundless as the music I adore.