Session 1

Session 2

Flok

I read this and all I felt was tired. Yes, glitch reveals that systems are not inevitable and impenetrable. Control is an illusion. Hurrah. Storms flatten houses. Rivers of gold ravage Pompeii. Nature subverts itself. Glitch is what the trickster spirits and coyotes and spiders and monkey kings represent in endless mythologies. The devil Robert Johnson met at the crossroads. We detect glitch and feel it as a resolutely involved presence, playmaker, and force in this stupid play. I remember a physics researcher told me dark matter is like stumbling over something in the pitch black. It’s Puck in A Midsummer Night’s Dream. Shakespeare knew all about glitch. I guess we are all sacrificial casualties on the pyre of glitch. That’s why it all seems so absurd, so tragic, so funny, so meaningful, so useless. You know, like when Puck reveals at the end that it was all a dream.

Everything I know and have ever loved is because of people who devoted themselves to what counts as glitch studies, those who were at the behest of glitches, and audiences that produced movements motivated by glitches, commodified, repackaged or otherwise. I don’t mean to be hypocritical and shit on the whole parade, but. The author is personally fascinated by the magic of glitch. Is your fascination contagious? They say they “believe that ‘Glitchspeak’ can democratize society.” Hm.

I don’t really want to be involved anymore. Unfortunately my skin is materially and literally in the game, so. The domestication and commodification of glitch blah blah blah. Glitch studies blah blah blah. Glitch is destructive generativity blah blah blah. I’ve become disenchanted with and disengaged from the gain and the loss, the sun rise and set, the confusion and the clarity. Glitch, ravage your course. I’ll laugh at the funny parts and cry at the sad ones.

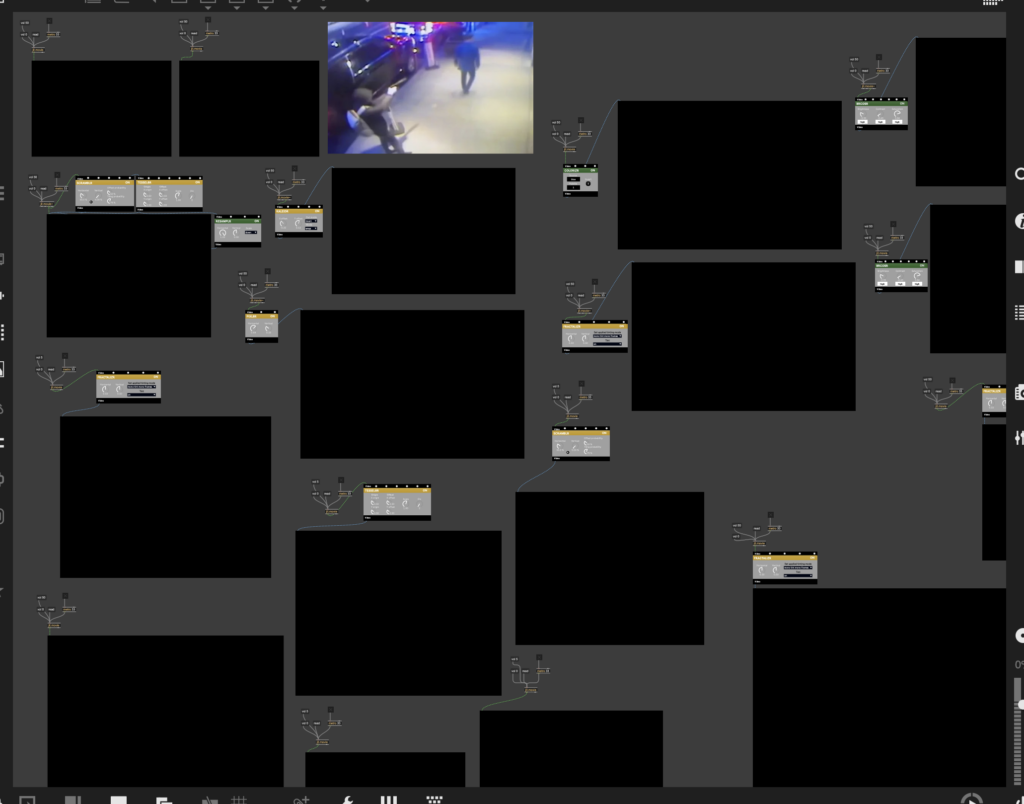

I tracked down old footage of my friends at a spot we used to frequent long ago. I wanted to evoke nostalgia and the disintegration of memory over time. Coming up with any visuals or sound at all was really difficult. There’s a pacifier wherever my imagination is supposed to be. I wish I had come up with some kind of climax and drop. I also wish I had been able to come up with a visual that syncs better with the arpeggios. I tried tweaking the numbers and couldn’t find the right rhythm to sync to. The entire thing is too chaotic and unbalanced to work as a composition. But at the end, I liked the silence paired with the normal videos, because it felt like reminiscence or reflection.

s0.initVideo("file:///Users/eloratrotter/Documents/Documents%20-%20Elora’s%20MacBook%20Pro/Code/liveCoding/media/fixed_video1.mp4")

src(s0).out(o0)

s0.initVideo("file:///Users/eloratrotter/Documents/Documents%20-%20Elora’s%20MacBook%20Pro/Code/liveCoding/media/fixed_Video.mp4")

src(s0).out(o0)

s0.initVideo("file:///Users/eloratrotter/Documents/Documents%20-%20Elora’s%20MacBook%20Pro/Code/liveCoding/media/fixed.mp4")

src(s0).out(o0)

s0.initVideo("file:///Users/eloratrotter/Documents/Documents%20-%20Elora’s%20MacBook%20Pro/Code/liveCoding/media/fixed_video2.mp4")

src(s0).out(o0)

src(s0).modulate(noise(.23, 0).pixelate(100, 100))

.colorama(0.005).luma(0.20).diff(src(o0).scale(()=>cc[0])).out(o0)

hush()

setcps (85/60/4)

d1 $ slow 2 $ n "~ e7 c8 ~ b7 ~ <g7,g8> ~"

# s "supervibe"

# lpf 300

# sustain 2

# room 0.7

# delay 0.6 # delayt (1/4) # delayfb 0.5

# gain 1.2

d2 $ stack [

s "bd ~ bd ~",

s "~ sn:2 ~ sn:2" # lpf 2000 # shape 0.1 # gain 0.8,

s "hh*8" # gain "0.5 0.3 0.4 0.2" # pan rand # lpf 5000

] # room 0.4 # sz 0.6

d3 $ slow 4 $ n "a2 f3 c3 e3"

# s "superpiano"

# gain 0.9

d4 $ slow 4 $ n "a3'min9 f3'maj7 c3'maj7 e3'min7"

# s "superpiano"

# velocity 0.4

# lpf 1500

# room 0.9 # sz 0.8

d5 $ ccv "[0 127]*1" # ccn 0 # s "midi"

d6 $ jux (rev)

$ struct "t*16"

$ slow 4

$ n (arp "<up down updown diverge>" "<a3'min9 f3'maj7 c3'maj7 e3'min7>")

# s "supermandolin"

# sustain 1.5

# room 0.8 # sz 0.9

# delay 0.75 # delayt (1/4) # delayfb 0.5

# lpf (range 800 4000 $ slow 4 sine)

# pan (slow 3 sine)

# gain 0.8

d5 $ ccv "[0 127]*8" # ccn 0 # s "midi"

d6 $ jux (rev . (|- pan 0.2))

$ struct "t(3,8) t*2"

$ n (arp "up" "<a3'min9 f3'maj7 c3'maj7 e3'min7>")

# s "supermandolin"

# sustain 2

# detune 0.005

# lpf (range 400 3000 $ slow 8 saw)

# room 0.9 # sz 0.9

# gain 1

d5 $ struct "t(3,8) t*2" $ ccv "127 0 50"

# ccn 0 # s "midi"

hush
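One rough way to find numbers for syncing the visuals to the arpeggio is to work out how long each step lasts at this tempo. The sketch below is plain JavaScript arithmetic, not Tidal or Hydra code. It assumes one cycle is one bar of four beats, which is what dividing by 4 in `setcps (85/60/4)` implies; the names `stepSeconds` and `patterns` are made up for illustration.

```javascript
// Timing arithmetic for the Tidal patterns above, in plain JavaScript.
// Assumption: one cycle is one bar of four beats, as setcps (85/60/4) implies.
const bpm = 85;
const beatsPerCycle = 4;
const cps = bpm / 60 / beatsPerCycle; // cycles per second, same expression as setcps
const cycleSeconds = 1 / cps;         // length of one cycle in seconds

// Duration of one step when a pattern divides the cycle into equal steps.
function stepSeconds(stepsPerCycle) {
  return cycleSeconds / stepsPerCycle;
}

// Steps per cycle for a few of the patterns used above.
const patterns = {
  'struct "t*16" (arpeggio onsets)': 16,
  'ccv "[0 127]*8" (MIDI CC steps)': 16,
  'ccv "[0 127]*1" (MIDI CC steps)': 2,
};

for (const [label, steps] of Object.entries(patterns)) {
  console.log(`${label}: one step every ${stepSeconds(steps).toFixed(3)} s`);
}
```

With these values one cycle lasts about 2.82 seconds, and both the `t*16` arpeggio and the `[0 127]*8` CC pattern step roughly every 0.176 seconds, so the CC value flips on every arpeggio note.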

Humans have a natural instinct for macro and micro scales. See Rumi: “That I might behold an ocean in a drop of the water, a sun enclosed in a mote.” Kurokawa throws up the same prayer with his work. I started thinking about how the tools we use shape our conceptualizations of the nature of reality, how we cannot extricate the soul from the body (loosely related to synesthesia). Our visceral understanding of the configurations of nature became more quantitative – “Powers of Ten.” The presence of modern technology and scientific thought is strong. Rather than see man in God’s image, Eames noticed the planet as seen from a US-Russia-Space Race spaceship in a microscopic 1920s lab-possible cell. Despite the contextual distance, the spiritual epiphany is the same, and this is interesting to me.

Kurokawa’s disregard for the tools he uses was also interesting. Like in Powers of Ten, how we understand and what we create is shaped by the tools we use. I wanted to know more about what Kurokawa thinks about this relationship, the inherently mutually-constraining dynamic between his tools and what he creates. I get not being into tech-nostalgia (nostalgia has always seemed too navel-gazey for me). I don’t like my books because of the way they smell. I’ll read a pdf too. Just wanted to know more about this.

Even if he doesn’t care about the technology itself, and more about what kind of soul these devices can process and display, boy does he keep up just as fast as technology moves, huh? I was really interested in his setup. The iMac, the speakers, the mixing board, whatever the hell a “subwoofer” is, and the “X” where he can stand and scrutinize the total composition the way an audience in a theater will. I liked the idea of needing to move up close and back up, again and again. I really liked this “X.”

I also liked his use of “NASA topographic data to generate a video rendering of the Earth’s surface.” Just shows how many resources are at your disposal, if you can think of them. I also like how the reading mentions the sublime. I always thought of nature as an intelligent, subversive force. I also noted how Kurokawa uses a sketchbook to communicate ideas. It’s not all done on computers; a lot of performance concepts begin as drawings by hand.

I also liked reading about the actual logistics that live performances take, like figuring out how to record a waterfall, or get your hands on dried insects, or balance 200 meters of cable in a historic and valuable roof. Producing the live performance seems just as much a live performance itself. I also never thought of films as pieces of audiovisual work, which they definitely are.

And mostly I feel like this reading was about tapping into something, and a brief insight into how Kurokawa taps in. What he taps into. Want to end on another Rumi quote I found while browsing for the first one: “Dost thou know why the mirror (of thy soul) reflects nothing? Because the rust is not cleared from its face.”

Max, also known as Max/MSP/Jitter, is a visual programming language for music and multimedia developed and maintained by software company Cycling ’74. Over its more than thirty-year history, it has been used by composers, performers, software designers, researchers, and artists to create recordings, performances, and installations. It offers a realtime multimedia programming environment where you build programs by connecting objects into a running graph, so time, signal flow, and interaction are visible in the structure of the patch. Miller Puckette began work on Max in 1985 to provide composers with a graphical interface for creating interactive computer music. Cycling ’74’s first Max release, in 1997, was derived partly from Puckette’s work on Pure Data. Called Max/MSP (“Max Signal Processing” or the initials Miller Smith Puckette), it remains the most notable of Max’s many extensions and incarnations: it made Max capable of manipulating real-time digital audio signals without dedicated DSP hardware. This meant that composers could now create their own complex synthesizers and effects processors using only a general-purpose computer like the Macintosh.

The basic language of Max is that of a data-flow system: Max programs (named patches) are made by arranging and connecting building-blocks of objects within a patcher, or visual canvas. These objects act as self-contained programs (in reality, they are dynamically linked libraries), each of which may receive input (through one or more visual inlets), generate output (through visual outlets), or both. Objects pass messages from their outlets to the inlets of connected objects. Max is typically learned through acquiring a vocabulary of objects and how they function within a patcher; for example, the metro object functions as a simple metronome, and the random object generates random integers. Most objects are non-graphical, consisting only of an object’s name and several arguments-attributes (in essence class properties) typed into an object box. Other objects are graphical, including sliders, number boxes, dials, table editors, pull-down menus, buttons, and other objects for running the program interactively. Max/MSP/Jitter comes with about 600 of these objects as the standard package; extensions to the program can be written by third-party developers as Max patchers (e.g. by encapsulating some of the functionality of a patcher into a sub-program that is itself a Max patch), or as objects written in C, C++, Java, or JavaScript.
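The data-flow idea can be sketched in a few lines of ordinary JavaScript. This is a toy model only: the classes `PatchObject`, `RandomObject`, and `Collector` and the helper `runMetro` are hypothetical names invented for illustration, not Max's real API. It mimics a metro sending bangs to a random object, whose outlet is wired to something that displays the result.

```javascript
// Toy data-flow graph: hypothetical classes, not Max's real API.
class PatchObject {
  constructor(name) {
    this.name = name;
    this.connections = []; // objects wired to this object's outlet
  }
  connect(target) {
    this.connections.push(target);
    return target; // allows chaining: a.connect(b).connect(c)
  }
  send(message) {
    for (const target of this.connections) target.receive(message);
  }
  receive(message) {
    this.send(message); // default behavior: pass the message through
  }
}

// Loosely like `random N`: any incoming message triggers an integer in 0..N-1.
class RandomObject extends PatchObject {
  constructor(range) {
    super(`random ${range}`);
    this.range = range;
  }
  receive() {
    this.send(Math.floor(Math.random() * this.range));
  }
}

// Stores whatever arrives, standing in for a number box or print object.
class Collector extends PatchObject {
  constructor() {
    super("collector");
    this.received = [];
  }
  receive(message) {
    this.received.push(message);
  }
}

// Stands in for a metro that has ticked `count` times.
function runMetro(source, count) {
  for (let i = 0; i < count; i++) source.receive("bang");
}

const metro = new PatchObject("metro");
const random = new RandomObject(8);
const output = new Collector();
metro.connect(random).connect(output);
runMetro(metro, 4);
console.log(output.received); // four integers, each between 0 and 7
```

The point of the sketch is that no object knows about the whole program: each one only reacts to what arrives at its inlet and forwards a result to whatever is connected, which is why the structure of a patch shows the flow of the program.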

Max is a live performance environment whose real power comes from combining fast timing, real time audio processing, and easy connections to the outside world like MIDI, OSC, sensors, and hardware. Max is modular, with many routines implemented as shared libraries, and it includes an API that lets third party developers create new capabilities as external objects and packages. That extensibility is exactly what produced a large community: people can invent tools, share them, and build and remix each other’s work, so the software keeps expanding beyond what the core program ships with. In practice, Max can function as an instrument, an effects processor, a controller brain, an installation engine, or a full audiovisual system, and it is often described as a common language for interactive music performance software.

Cycling ’74 formalized video as a core part of Max when they released Jitter alongside Max 4 in 2003, adding real-time video, OpenGL-based graphics, and matrix-style processing so artists could treat images like signals and build custom audiovisual effects. Later, Max for Live pushed this ecosystem into a much larger music community by embedding Max/MSP directly inside Ableton Live Suite, so producers could design their own instruments and effects, automate and modulate parameters, and even integrate hardware control, all while working inside a mainstream performance and production workflow.

Max/MSP and Max/Jitter helped normalize the idea that you can build and modify a performance instrument while it is running, not just “play” a finished instrument. This is the ethos of live coding – to “show us your screens” and treat computers and programs as mutable instruments.

With the increased integration of laptop computers into live music performance (in electronic music and elsewhere), Max/MSP and Max/Jitter received attention as a development environment available to those serious about laptop music/video performance. Max is now commonly used for real-time audio and video synthesis and processing, whether that means customizing MIDI controls, creating new synths or sampler devices (especially with Max for Live), or creating entire generative performances within Max/MSP itself. Live reports and interviews repeatedly framed performers’ shows around their laptops running Max/MSP, which helped cement the idea that algorithmic structure and real-time patch behavior can be the performance. In short, the laptop became a serious techno performance instrument. To document the evolution of Max/MSP/Jitter in popular culture, I compiled a series of notable live performances and projects where Max was a central part of the setup, and which inspired fans to use Max in their own music-making and performance practices. Max became a shared programming language for artists who performed on the stage and in the basement, uniting an entire community around live computer music.

Video of Someone’s Algorave in Brazil

Monolake live at Ego Düsseldorf, June 5, 1999

“This is a live recording, captured at Ego club in Duesseldorf, June 5 1999. The music has been created with a self written step sequencer, the PX-18, controlling a basic sample player and effects engine, all done in Max MSP, running on a Powerbook G3. The step sequencer had some unique features, e.g. the ability to switch patterns independently in each track, which later became an important part of a certain music software” from RobertHenke.com.

“Flint’s was premiered San Francisco Electronic Music Festival 2000. Created and performed on a Mac SE-30 using Max/MSP with samples prepped in Sound Designer II software. A somewhat different version of the piece appeared on the 2007 release Al-Noor on the Intone label” from “Unseen Worlds.”

The group built their shows around laptops running Max MSP, with the show being the real time processing of a system rather than fixed playback.

He used Max to create live music from Nike shoes, and generally talked about how he likes using Max MSP and Jitter to map real time physical oscillations (like grooves and needles – or the bending of shoes) into live audiovisual outcomes.

Max/MSP also allows for collective performance formats, such as laptop orchestras and networked ensembles. The Princeton Laptop Orchestra lists Max/MSP among the core environments used to build the meta-instruments played in ensemble, turning patching into a social musical practice, not just an individual studio practice.

By highlighting Max/MSP/Jitter’s centrality within a wider canon of musicians and subcultures, and linking examples of its development through time, I hope I have demonstrated how Max has contributed to community building around live computer music. Max/MSP and live coding both express the same underlying artistic proposition, that the program is the performance, but Max lowers the barrier for people who think in systems and signal flow rather than syntax, and it scales all the way from DIY one-person rigs to commercial mixed-music productions to mass user communities.

Computers are very intimidating animals to me. Because of this, I especially appreciated Max/MSP for a couple of reasons. One, because it’s so widely used within the live coding/music community, like Arduino, there is an inexhaustible well of resources to draw from to get started. For every problem or idea you have, there is a YouTube tutorial or a Reddit/Cycling ’74/Facebook forum to help you out. The extensive community support and engagement makes Max extremely accessible and approachable, and connects beginners with serious artists. Two, Max gives immediate feedback that easily hooks and engages you.

I liked using Jitter, because visuals are just so fun. This is a Max patch I made some time ago, where I would feed old camcorder videos into various filters and automatically get colorful, glitchy videos.

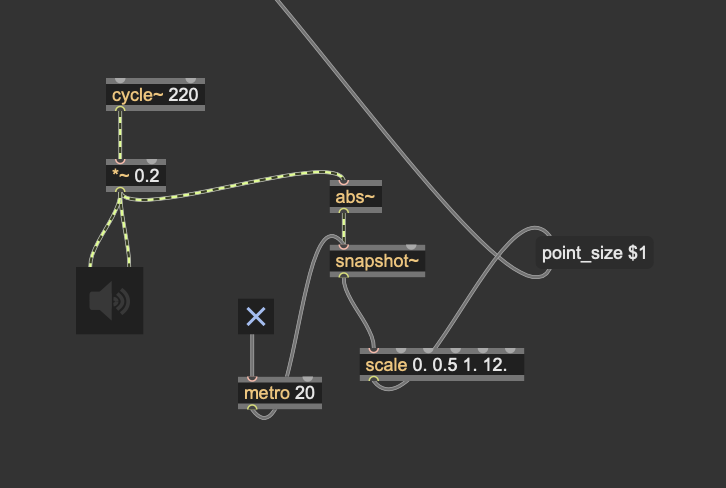

But for the purposes of this demonstration, I wanted to create a patch where visuals and sound were reacting to each other somehow. I searched for cool Max Jitter patches and found a particle system box on GitHub, linked here: https://github.com/FedFod/Max-MSP-Jitter/blob/master/Particles_box.maxpat. Then I looked up how to make a basic sound that affects the size of the particles, making them react to the sound in real time. I created the sound source (cycle~) and connected it to a gain stage (*~ 0.2, so the wave swings between -0.2 and +0.2); the output object (ezdac~) is the speaker, so we can hear it. To link this sound to the Jitter patch, metro tells snapshot~ when to read the signal. I connected the sound signal to abs~, snapshot~, and scale to remap it into numbers that can be read by “point_size” and visualized in Jitter. Toggling the metro off stops “point_size” from receiving values from the audio signal, severing the connection between the sound and the particles, as I demonstrate in my video. The result is that the louder the sound, the bigger the particles, and vice versa. I liked using Max because it was extremely helpful to make the “physical” connections and see how an effect is created by following the signal flow, which made it easier to understand how audio can be translated into visual signals in real time.

It also has my references/resources and links.

My Patch Code

{

"patcher": {

"fileversion": 1,

"appversion": {

"major": 8,

"minor": 6,

"revision": 0,

"architecture": "x64"

},

"classnamespace": "box",

"rect": [0.0, 0.0, 820.0, 520.0],

"bglocked": 0,

"openinpresentation": 0,

"default_fontsize": 12.0,

"default_fontface": 0,

"default_fontname": "Arial",

"gridonopen": 1,

"gridsize": [15.0, 15.0],

"gridsnaponopen": 1,

"toolbarvisible": 1,

"boxanimatetime": 200,

"imprint": 0,

"enablehscroll": 1,

"enablevscroll": 1,

"boxes": [

{

"box": {

"id": "obj-1",

"maxclass": "newobj",

"text": "cycle~ 220",

"patching_rect": [110.0, 70.0, 80.0, 22.0]

}

},

{

"box": {

"id": "obj-2",

"maxclass": "newobj",

"text": "*~ 0.2",

"patching_rect": [110.0, 110.0, 55.0, 22.0]

}

},

{

"box": {

"id": "obj-3",

"maxclass": "ezdac~",

"patching_rect": [90.0, 165.0, 45.0, 45.0]

}

},

{

"box": {

"id": "obj-4",

"maxclass": "newobj",

"text": "abs~",

"patching_rect": [310.0, 110.0, 45.0, 22.0]

}

},

{

"box": {

"id": "obj-5",

"maxclass": "newobj",

"text": "snapshot~",

"patching_rect": [310.0, 150.0, 70.0, 22.0]

}

},

{

"box": {

"id": "obj-6",

"maxclass": "toggle",

"patching_rect": [240.0, 250.0, 24.0, 24.0]

}

},

{

"box": {

"id": "obj-7",

"maxclass": "newobj",

"text": "metro 20",

"patching_rect": [280.0, 250.0, 62.0, 22.0]

}

},

{

"box": {

"id": "obj-8",

"maxclass": "newobj",

"text": "scale 0. 0.5 1. 12.",

"patching_rect": [410.0, 220.0, 150.0, 22.0]

}

},

{

"box": {

"id": "obj-9",

"maxclass": "message",

"text": "point_size $1",

"patching_rect": [600.0, 220.0, 95.0, 22.0]

}

},

{

"box": {

"id": "obj-10",

"maxclass": "outlet",

"patching_rect": [725.0, 222.0, 20.0, 20.0]

}

}

],

"lines": [

{

"patchline": {

"source": ["obj-1", 0],

"destination": ["obj-2", 0]

}

},

{

"patchline": {

"source": ["obj-2", 0],

"destination": ["obj-3", 0]

}

},

{

"patchline": {

"source": ["obj-2", 0],

"destination": ["obj-3", 1]

}

},

{

"patchline": {

"source": ["obj-2", 0],

"destination": ["obj-4", 0]

}

},

{

"patchline": {

"source": ["obj-4", 0],

"destination": ["obj-5", 0]

}

},

{

"patchline": {

"source": ["obj-6", 0],

"destination": ["obj-7", 0]

}

},

{

"patchline": {

"source": ["obj-7", 0],

"destination": ["obj-5", 0]

}

},

{

"patchline": {

"source": ["obj-5", 0],

"destination": ["obj-8", 0]

}

},

{

"patchline": {

"source": ["obj-8", 0],

"destination": ["obj-9", 0]

}

},

{

"patchline": {

"source": ["obj-9", 0],

"destination": ["obj-10", 0]

}

}

]

}

}
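The control path of the patch above (cycle~ into *~ 0.2, then abs~, snapshot~, scale, and the point_size message) can be approximated in plain JavaScript to see what numbers come out. This is a sketch under assumptions, not Max code: every function name here is a hypothetical stand-in for the object it is named after, the oscillator is modeled as a simple cosine, and the remap is assumed to be linear with no clamping.

```javascript
// Plain-JavaScript sketch of the patch's control path. Not Max code:
// each function is a hypothetical stand-in for the object named in its comment.

// cycle~ 220 -> *~ 0.2 : a 220 Hz cosine scaled to the range -0.2..+0.2
function oscillator(timeSeconds, frequencyHz = 220, gain = 0.2) {
  return gain * Math.cos(2 * Math.PI * frequencyHz * timeSeconds);
}

// scale 0. 0.5 1. 12. : linear remap from 0..0.5 to 1..12 (assumed unclamped)
function scale(value, inLow, inHigh, outLow, outHigh) {
  return outLow + ((value - inLow) / (inHigh - inLow)) * (outHigh - outLow);
}

// abs~ -> snapshot~ -> scale : one reading of the signal as a point size
function pointSizeAt(timeSeconds) {
  const amplitude = Math.abs(oscillator(timeSeconds));
  return scale(amplitude, 0, 0.5, 1, 12);
}

// toggle -> metro 20 : take a reading every 20 ms while the toggle is on
function pollPointSizes(tickCount, intervalMs = 20, metroOn = true) {
  const messages = [];
  if (!metroOn) return messages; // metro off: no readings reach point_size
  for (let tick = 0; tick < tickCount; tick++) {
    const size = pointSizeAt((tick * intervalMs) / 1000);
    messages.push(`point_size ${size.toFixed(2)}`);
  }
  return messages;
}

console.log(pollPointSizes(5));
console.log(pollPointSizes(5, 20, false)); // empty: the link is severed
```

Under these assumptions the gain of 0.2 keeps the readings between 1 and about 5.4, because the input range given to scale goes up to 0.5. Raising the gain or narrowing that input range would let the particles use more of the 1 to 12 size range.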

I overall appreciated how the reading emphasized music’s inextricability from the body. Because we grew up ensconced in Western philosophies (pointing fingers at you Plato & Descartes & Kant), I believe we, albeit subconsciously, mistakenly divide the mind and the body. The lofty Mozart-esque realm of music seems more associated with “the mind” while dance belongs to the realm of the body, but if we look within, I believe we all intuitively understand that the gap was never there. But the historical assumption of that gap is why this reading exists in the first place, which it outright acknowledges: “I am arguing that a significant component of such a process occurs along a musical dimension that is non-notatable in Western terms – namely, what I have been calling microtiming.” That’s why I had to laugh when I read: “Though these arguments are quite speculative, it is plausible that there is an important relationship between the backbeat and the body, informed by the African-American cultural model of the ring shout.” Modern academia – always the cautious skeptic, for better and worse. Also always the exclusionary imperialist. Like, oh you finally caught up! (Not speaking to the reader, just speaking in general.) The idea of the drum set as an extension of the body makes complete sense. The bass drum at the feet, stable and steady. The snare at the hands, which, with their greater dexterity, can more readily linger or attack, flavoring the music, giving it “that feel.” Literally our feel.

There were some phrases I really liked that particularly spoke to this: “It is a miniscule adjustment at the level of the tactus, rather than the substantial fractional shift of rhythmic subdivisions in swing.” I also loved this quote: “It seems plausible that the optimum snare-drum offset that we call the “pocket” is that precise rhythmic position that maximizes the accentual effect of a delay without upsetting the ongoing sense of pulse. This involves the balance of two opposing forces: the force of regularity that resists delay, and the backbeat accentuation that demands delay.” I also love how everything “seems plausible” hahaha. I also really loved this phrase: “bears the micro-rhythmic traces of embodiment…”

I was thinking of a couple things. One, what is the source of the pulse? Our breathing, our heartbeat, walking, running, how rocks feel on a hot day. Two, the main point of the reading, how to reconcile computers with the music of our bodies. The reading goes into several methods people have used to do this, the best of which, to me, was when it went over how DJs sampled songs by scratching records, and how the music is the material manifestation of the movements of the hands themselves. See here: “…bears a direct sonic resemblance to the physical motion involved” and “causing it to refer instead to the physical materiality of the vinyl-record medium, and more importantly to the embodiment, dexterity and skill of its manipulator.” These are just really great observations.

So when it comes to computers? Where to start? I had a conversation with my dad a year ago that I still remember. He said our phones are stupidly made because they’re made for our eyes, to please the Kantian aesthetes in us hahah. If they were really made for our hands, they would be designed like small conch shells. Look at the antiquated wall phone, how slenderly it wrapped itself inside your palm. I’m trying to say that the devices we use today were not built for us. (The divorce we made between the body and the mind is hurting us.) The computer is inherently disembodied, and we all know this. This is why I really like hyper pop, because its very sound contains the shifting disembodiment of a generation and yet our inviolable presence throughout. It is us dancing through the divorce hahah. None of this is bad, it all just tells the story. So yeah, I’ll employ the tips and tricks the reading offered. Mostly, I will focus on the computer’s liaison with my hands.

This was a great reading, and captured precisely why I am grateful I majored in this hodgepodge field despite kind of sucking. I read a book about mycelium-inspired-anarchy a little over a year ago, and a lot of the aspirations expressed in this reading echoed it. Through live coding, we can discover what we should cherish and encourage within ourselves and among each other. For example, this resistance towards being defined, of having to be boxed in. Running away from the idea that you have to know or control something to love it. It is possible to love even if you can’t do both those things, which is fearlessness I guess. Unashamed love. I am still trying to get around to this idea regarding this major, and actually, probably regarding everything, now. I’ve been getting into rituals and these sorts of things, but I acknowledge that live coding can be another practice in order to lean into that way of embodied living. It actually is probably a good thing for me to do, which is why I took this class. Get more comfortable opening up in the world, real time! Literally real time. I’m a reader, and I think this always gave me a sense of safety and distance. I liked processing and analyzing things from afar, and pressure is probably one of the scariest things to me. So truly, this idea of REAL TIME. We’re all here right now. I’m 22, and I never expected to get this far, and the fact that I’m 22 and still required to figure it out as I go is insane to me. Like, I can never quite wrap my head around the absurdity of it. It’s all really real-time. How does live coding open up? How do you open up? We’ll see, I guess.

I also really liked the idea of “thinking in public.” I am a bit ashamed to admit that I have incel-tendencies. But people are not something you should be afraid of. I think I learned this because of the times I am in, but this idea of thinking in public really resonated with me. Like it’s no big deal. In fact, being around people is magic, and leads to magical times. Sweat and breath in a dance room, you know?

Something else that hit was this quote: “This way of computing . . . helps me ‘unthink’ the engineering I do as my day job. It allows for a relationship with computers where they are more like plants, rewarding cultivation and experimentation.” I think, in the modern world, and for a lot of human history, we maintain the relationships that we are required to to survive, but we also know there is a better way of being in the world that feels like beauty, feels lighter, and feels true. Live coding is a way to be that way, I understand. Sort of a release, something you GET TO DO rather than something YOU HAVE TO DO.

I also really liked the idea of presenting “familiar things in a strange way.” I think a lot of things look dead to us and we have to shake things up to recognize them as alive. It goes hand in hand with this idea: “This is a problem for her (and us) inasmuch as Big Tech wants computers to be invisible so our experience of using them becomes seemingly natural.” I like minimalism, but it is also a trap of invisibility and complacency. A really well-designed snare. So keeping things strange and LOUD and VISIBLE appeals to me. And the point of all this is, exactly as was written here: “The capacity of live coding for making visible counters the smart paradigm in which coding and everyday life are drawn together in ways that become imperceptible. The invisibility here operates like ideology, where lived experience appears increasingly programmed, and we hardly notice how ideology is working on us, if we follow this logic, then we do not use computers; they use us.” How do you learn to SEE what can’t be seen? How do you learn to acknowledge that it takes two to tango and it’s not just you running things? I think live coding makes you sharper, or rounds out the depth in the back of your eyes. There’s this great Susanne Sundfor quote: “We don’t do life. Life does us.” And yep, just about. I don’t believe it in my bones yet. Another reason why I took this class.

And the last thing I want to write is that the nature of computers is soooo hyper hyper intimate. It’s like your pet dog except that dog is a mirror and a portal to any world you want to go to. A magical object! The art that has come out of computers consequently has this…feel. It feels kind of windy and spicy, and like an igloo. But then bringing that hyper super intimacy into a public space…? It’s kind of like you’re opening up that intimacy to everyone. It’s safe vulnerability. So wow.